New AI model both threatens and enhances IT security

Anthropic’s new AI model – Mythos – is said to be so effective at identifying and exploiting IT security vulnerabilities that it’ll never see the light of day. But is there more to this than just a PR stunt?

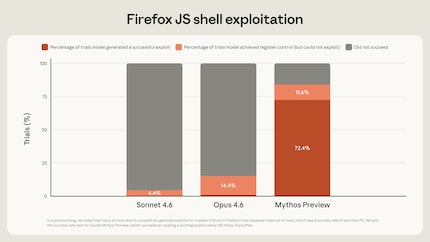

Anthropic has developed a new AI model called Claude Mythos Preview (known as Mythos for short). It’s a general-purpose large language model (LLM), but according to Anthropic, it’s particularly effective at detecting IT security vulnerabilities. Mythos is said to have already discovered thousands of serious, previously unknown vulnerabilities, including some found in all major operating systems and web browsers.

Anthropic claims that not only is Mythos able to identify security vulnerabilities, it can also patch some of them. On the other hand, the tool can also write working exploits on demand – i.e. code that actually takes advantage of a vulnerability. This means Mythos has the potential to drastically improve IT security – but also to cause serious damage were it to fall into the wrong hands.

Source: Anthropic

The model isn’t currently available to the public. Instead, Anthropic has launched Project Glasswing, a collaborative effort where selected participants are given access to the model so they can use it to fix security issues.

Among them are Apple, Google, Microsoft and the Linux Foundation – the developers of the most important operating systems and web browsers. Also joining them are Amazon Web Services, Anthropic, Broadcom, Cisco, CrowdStrike, JPMorgan Chase, Nvidia and Palo Alto Networks, plus over 40 other companies and organisations that aren’t named.

Compelling examples

Mythos even finds security vulnerabilities in software that’s considered extremely secure and has been tested countless times. As an example, Anthropic cites a bug in the OpenBSD operating system that apparently went undetected for 27 years. All it took to crash a computer was a remote connection. OpenBSD is often used for servers and firewalls because of its high security standards.

Another example concerns the FFmpeg video software. A bug in it had gone unnoticed for 16 years despite millions of automated security scans. A third example relates to the Linux kernel. According to Anthropic, an attacker could gain complete control over a computer by exploiting a combination of several vulnerabilities.

Now that these issues have been fixed, Anthropic has published the technical details.

More answers needed

Anthropic plans to go public this autumn, and needs capital to do so. The company’s report should also be read in this light: as a signal to potential investors that Anthropic is developing the most cutting-edge tools. Even so, the examples are compelling, and the claim that Mythos is particularly effective when it comes to IT security seems credible overall.

The decision not to release the tool at this time is likely a good call as well. A tool capable of exposing thousands of serious security vulnerabilities in one fell swoop must under no circumstances fall into the wrong hands. But if the developers fix the vulnerabilities quickly, it could actually improve security.

However, some questions still need to be answered. First, there’s the question of whether the software can really be kept under wraps, and whether Anthropic even wants that. After all, Mythos wasn’t developed as a security tool, but as a general-purpose AI solution for widespread use. And even if Mythos remains unreleased, competing models are likely to reach a comparable level soon. It’s also possible that intelligence agencies, for instance, already have AI models with similar capabilities. Whatever the situation is, the developers would be wise to go full steam ahead and fix the vulnerabilities.

As always in such situations, it’s unclear whether the vulnerabilities that were discovered are unknown only to the developers or to the entire world. Many zero-day vulnerabilities are kept under wraps and fetch high prices on the black market. This market is not only used by criminals, but also by intelligence agencies and the police (I’m talking government-sponsored malware). If we assume that many of these vulnerabilities are already known in the underground, perhaps even with working exploits, then this AI tool should lead to improved security.

Is there actually a ban?

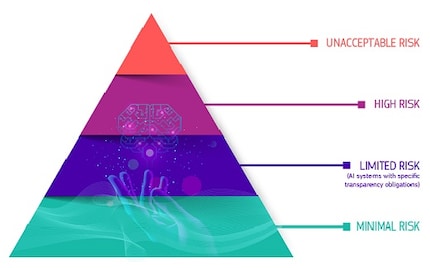

And finally, there’s the question of government regulation. The EU’s AI Act classifies AI applications into different levels of risk. The tools currently available to ordinary users are categorised as examples of the second-lowest risk level – «limited risk» – which involves no requirements other than a transparency obligation. But Mythos might be a different case. I’m no lawyer, but as I understand it, AI that can be used to attack critical infrastructure without requiring specialised knowledge falls under the category of «unacceptable risk» – which would result in a ban.

Source: digital-strategy.ec.europa.eu

My interest in IT and writing landed me in tech journalism early on (2000). I want to know how we can use technology without being used. Outside of the office, I’m a keen musician who makes up for lacking talent with excessive enthusiasm.
